Students at a Washington Heights elementary school were frustrated with Eric Adams’ school food cuts. But their advocacy had a bigger impact than bringing back their favorite chicken dish.

Mayor Eric Adams has insisted all families who want spots in the city’s preschool programs would receive them, despite budget cuts to early childhood education.

Council members questioned officials as the looming expiration of federal COVID relief money threatens to shave $808 million from the Education Department’s budget.

In P.S. Weekly’s food episode, fourth graders visit NYC schools’ test kitchen, high schoolers rate grilled cheese sandwiches, and students dish on having microwave access.

If restorative justice funding is cut, advocates worry schools will increasingly resort to suspensions instead of alternatives like peer mediation.

Under state law, schools must conduct at least four lockdown drills each year. Lawmakers and advocates say that’s “excessive and ineffective.”

Before the pandemic, at least 137 schools serving roughly 70,000 students did not have a school nurse, according to one estimate.

Spinning up a virtual learning program would be optional, and the plan does not force principals to choose any specific method for achieving the new caps.

The protest was a sharp contrast to the congressional hearing earlier in the day that focused almost exclusively on the experiences of Jewish students and educators.

‘There have been unacceptable incidents of antisemitism in our schools,’ Banks told members of Congress. But he also defended the record of the nation’s largest school system.

This episode of P.S. Weekly is dedicated to inspiring educators as we celebrate Teacher Appreciation Week.

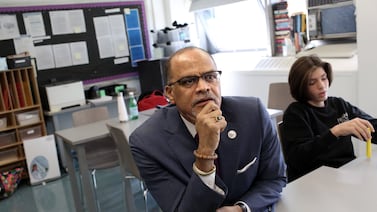

Chancellor David Banks is set to testify at a congressional hearing on antisemitism in K-12 schools, facing the committee that recently grilled the presidents of elite colleges.

New York City’s teachers union is ratcheting up the pressure on the Education Department to comply with the state class size law.

Students presented their ideas for dealing with the teen mental health crisis, bias toward immigrants, and rats at a youth version of the famous Aspen Ideas Festival.

The announcement set off alarm bells for school integration advocates, who worry it could roll back progress diversifying several high-demand schools.

Banks previews the message he plans to take to Congress for a hearing on responses to antisemitism in school.

As NYC students figure out college plans, many are scrutinizing how administrators respond to campus activism.

Mayor Eric Adams and top police officials continued to claim, with little evidence, that “outside agitators” were behind the encampments.

A trip to the Arctic inspired Brooklyn Prospect High School’s Caitlyn Homol to create a unit exploring “the relationship between motivation, action, and climate attitudes.”

“It's a fundamentally wrong and unfair practice,” one student said, calling it “affirmative action for the wealthy.”

About 8% of New York City students opted out of the state’s reading test last year, roughly double the pre-pandemic rate.

More school buildings were impacted by Tropical Storm Ophelia than previously known — and the city comptroller faulted the city’s communication during the storm.

The smaller budget is largely the result of expiring federal relief dollars, and Adams’ proposal saves a slew of programs that were on the chopping block.

A Manhattan parent board’s nonbinding resolution to revisit school guidelines on transgender girls' participation in sports raised alarms among trans students and their allies.

The new financial aid application was supposed to be ‘faster and easier.’ For me, it has been anything but.

“This decision making was clearly rushed,” one lawmaker said. “It's not best practice, but this is where we are.”

This marks by far the city’s largest commitment to date to replace the dwindling pandemic aid.

Almost 75% of the city’s high schools do not have student publications, according to a 2022 study.

Black and Hispanic students have historically had far less access to sports. The situation has led one school’s dean to file a federal civil rights complaint.

Studies show students who complete federal financial aid applications are far more likely to attend college.

One is participating in an intensive apprenticeship program at Bloomberg and the other dashed off 23 college applications.

Schools are supposed to give parents of students in temporary housing free MetroCards each month. But problems with distributing them are leading to absences and fare evasion tickets.

“There’s still time to see if we can get this worked out,” Gov. Kathy Hochul said of her push to include New York City’s mayoral control governance system in the budget.

The ‘Youth Civic Hub,’ an online portal launched on Friday, aims to increase youth civic engagement and electoral participation.

Deputy Chancellor Dan Weisberg made the comments after a Brooklyn superintendent suggested his district, which includes affluent neighborhoods, would have flexibility with the curriculum mandate.

The city school system, like districts across the country, has dealt with a surge in tensions following Hamas’s Oct. 7 attack on Israel and Israel’s subsequent bombardment of the Gaza Strip.

The literacy overhaul has enjoyed support from many advocates and experts. But will the momentum last as NYC expands its reading instruction shift?

This episode of P.S. Weekly focuses on New York City’s complex special education system and challenges students face getting accommodations like extra time on exams.